The worldwide market revenue for AI is expected to grow significantly through 2030. Forecasts differ, with estimated market sizes ranging from $126 billion to $554 billion in 2025 and reaching $1,500 billion in 2030. The European AI software market is forecast to grow from $2.09 billion in 2018 to $26.52 billion by 2025, while the AI software segment accounted for 88 percent of total AI market revenue in 2020 (and even more in 2021).

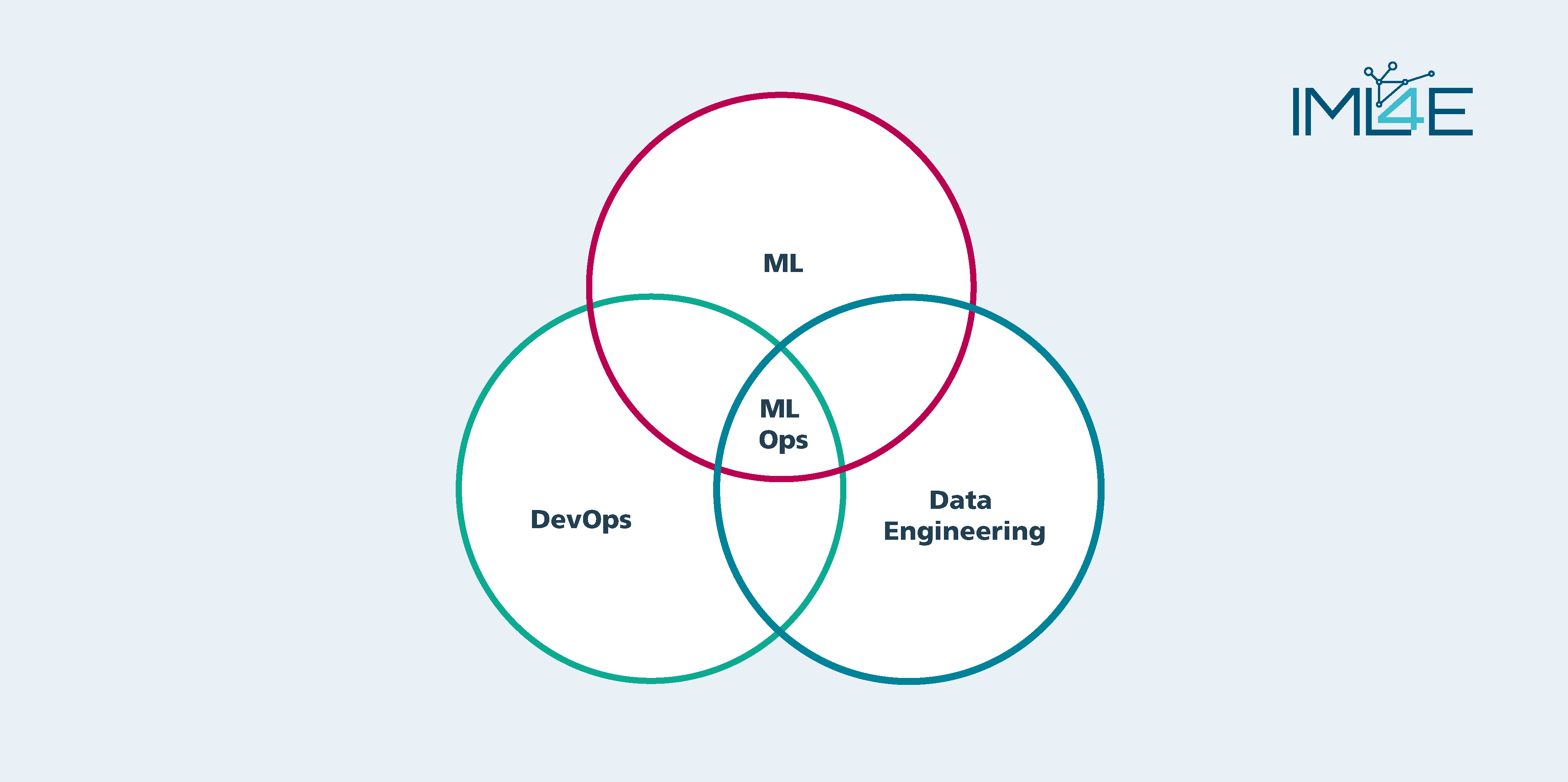

MLOps is a collection of techniques, tools and processes for deploying ML models in production, and thus the main transition path towards industrialized AI solutions. It combines DevOps, DataOps and ML by supporting the collection, selection, preparation and versioning of data; the development, selection and integration of the most appropriate model; and finally its deployment to production. All of this is combined in an automated tool chain and managed by coordinated processes.

IML4E develops high-quality, interoperable data preparation infrastructures for trustworthy ML and scalable MLOps techniques and tools for critical application domains. By

- shortening time to market for ML applications,

- raising the level of trust in ML,

- fostering flexible adoption of MLOps across different industries,

- easing auditability of the product, and

- reducing maintenance effort,

IML4E will ultimately leverage MLOps for European industry.

On a technical level, IML4E will

- improve modularity and reuse of artifacts throughout the MLOps lifecycle,

- boost automation, interoperability, and tool support,

- promote the seamless integration between tools, methods, and techniques,

- foster European and international standards that harmonize tools, methods, and formats,

and thus allow for flexible end-to-end MLOps solutions that match the requirements of different industrial domains such as industrial automation, healthcare, and e-invoicing.